Reddit web scraping is the process of collecting publicly available data from the platform, such as posts, profiles, and comments. Harvesting Reddit data manually is time-consuming, error-prone, and inefficient, so researchers, marketers, and SEO professionals often automate the work with bots or scripts. If you manage multiple Reddit accounts for scraping, you may also need proxies.

Reddit offers a public API to facilitate data extraction, but scraping through the API is limited for two reasons. First, the API enforces rate limits, which cap the number of requests you can send within a given time frame. Second, it requires authentication credentials, which adds setup work and can slow down the entire process.

How Does a Reddit Scraper Work?

To scrape Reddit data, the scraper sends HTTP requests to the platform and parses the HTML or JSON responses. It then extracts the required elements, such as usernames or comments, and finally cleans the extracted data and stores it in a predefined format. Publicly available data that you can scrape on Reddit includes:

- Post titles

- Usernames

- Comments (threads and responses)

- Scores (upvotes and downvotes)

- Subreddit metadata

- Timestamps and edit history
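To make the parse, extract, clean, and store stages concrete, here is a minimal sketch that runs on a response you have already downloaded. It assumes Reddit's listing JSON shape, where each post sits under `data.children[i].data`, and the field names in `FIELDS`; check both against a live response before relying on them. The function names are illustrative, not part of any library.

```python
import csv
import io
from datetime import datetime, timezone

# Assumed field names from Reddit's listing JSON; verify against a real response.
FIELDS = ["title", "author", "subreddit", "score", "num_comments", "created_utc"]


def extract_posts(listing: dict) -> list[dict]:
    """Extract the chosen fields from each post in a Reddit-style listing and clean them."""
    rows = []
    for child in listing.get("data", {}).get("children", []):
        post = child.get("data", {})
        row = {field: post.get(field) for field in FIELDS}
        # Cleaning: trim stray whitespace and turn the Unix timestamp into ISO 8601.
        row["title"] = (row["title"] or "").strip()
        if row["created_utc"] is not None:
            row["created_utc"] = datetime.fromtimestamp(
                row["created_utc"], tz=timezone.utc
            ).isoformat()
        rows.append(row)
    return rows


def to_csv(rows: list[dict]) -> str:
    """Store the cleaned rows in a predefined format (CSV text with a header row)."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()
```

Writing the CSV to a string keeps the sketch easy to test; in practice you would pass an open file to `csv.DictWriter` instead.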

Common challenges when using a Reddit scraper include CAPTCHAs, blocked IPs, nested comment sections, and rate limits. Tools built to handle these obstacles can make your scraping faster and more reliable.
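Nested comment sections are a challenge because replies are stored as a tree, not a flat list. The sketch below flattens such a tree depth-first and records each comment's depth. It assumes the comment JSON shape Reddit returns for a thread's `.json` URL (comments as `kind == "t1"` objects whose `replies` field is either an empty string or another listing, plus `"more"` placeholders for comments not included in the response); treat that shape as an assumption to verify. `flatten_comments` is a hypothetical helper name.

```python
def flatten_comments(children: list[dict], depth: int = 0) -> list[dict]:
    """Walk a Reddit-style comment tree depth-first and return flat rows."""
    flat = []
    for child in children:
        if child.get("kind") != "t1":
            # "more" stubs stand in for comments not included in this response;
            # loading them needs extra requests, so this sketch skips them.
            continue
        data = child.get("data", {})
        flat.append({"author": data.get("author"), "body": data.get("body"), "depth": depth})
        replies = data.get("replies")
        # Leaf comments carry an empty string instead of a nested listing.
        if isinstance(replies, dict):
            nested = replies.get("data", {}).get("children", [])
            flat.extend(flatten_comments(nested, depth + 1))
    return flat
```

Keeping the `depth` value lets you rebuild the thread structure later, or filter to top-level comments only.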

Where to Get a Reddit Scraper

Scrapers fall into two broad groups: code and no-code scrapers. Code scrapers use a programming language such as Python, JavaScript, or Java to build a script that automates data extraction. This option requires coding skills and the technical expertise to write code that can get past CAPTCHAs and rate limits. If your goal is only to extract images from a page, you can also write a script for a dedicated Reddit image scraper.
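A Reddit image scraper can start as a simple filter over post links. This minimal sketch assumes each post in a listing carries its linked media URL in a `url` field and keeps only URLs whose path ends in a common image extension. Galleries, videos, and preview images are not covered, and `image_urls` is a hypothetical helper name.

```python
from urllib.parse import urlparse

IMAGE_EXTENSIONS = (".jpg", ".jpeg", ".png", ".gif", ".webp")


def image_urls(listing: dict) -> list[str]:
    """Return post URLs that point directly at image files."""
    urls = []
    for child in listing.get("data", {}).get("children", []):
        url = child.get("data", {}).get("url") or ""
        # Check only the path so query strings such as ?width=640 don't hide the extension.
        path = urlparse(url).path.lower()
        if path.endswith(IMAGE_EXTENSIONS):
            urls.append(url)
    return urls
```

Downloading the files is then a matter of requesting each returned URL, ideally with the same rate limiting you apply to page requests.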

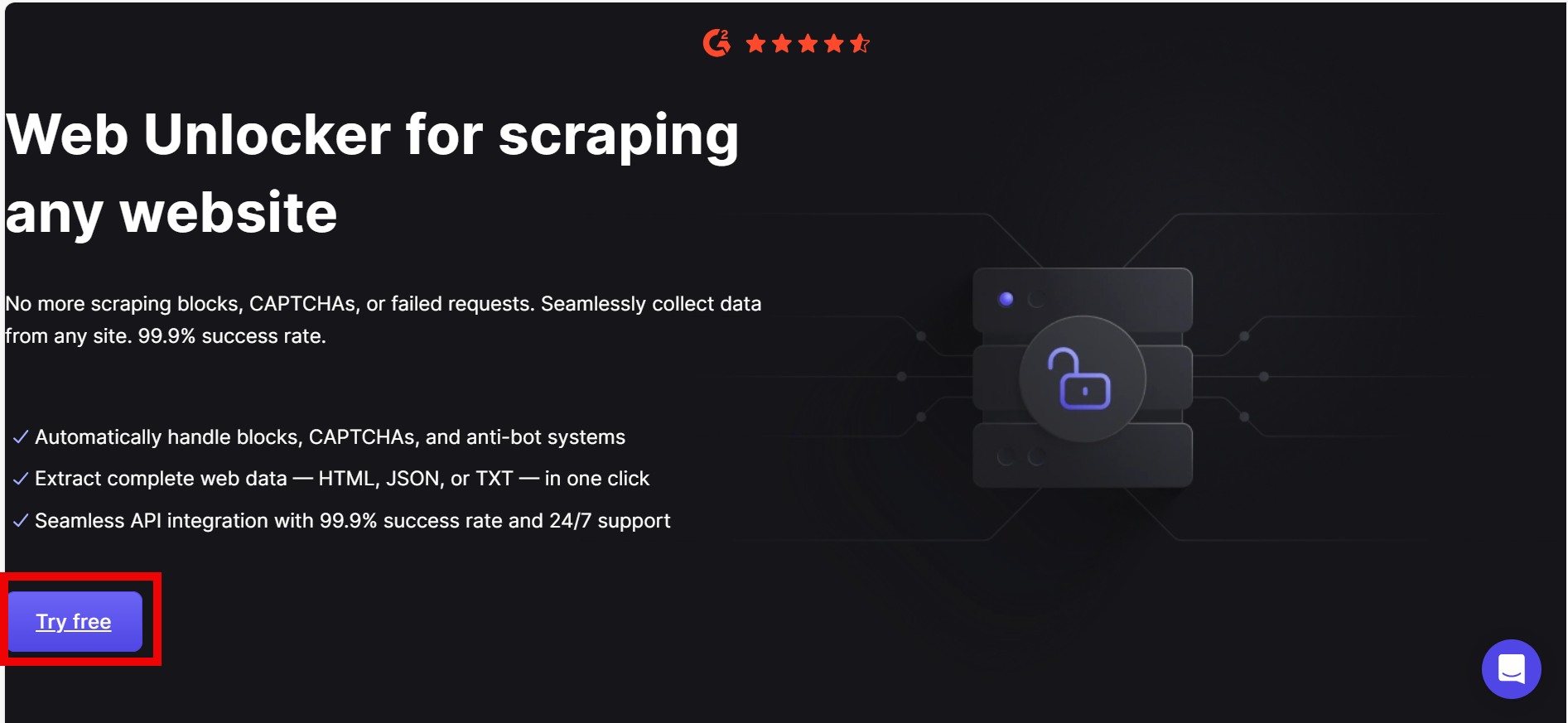

The no-code option, on the other hand, does not require coding or a broad understanding of programming languages. If you want an easy way to scrape Reddit, no-code tools come in handy: their features let you extract data from platforms like Reddit in a few steps. One reliable source of a no-code scraping solution is Floppydata, whose tool streamlines Reddit data collection with features that bypass CAPTCHAs and IP bans. Floppydata's Web Unblocker is well suited to scaling up to large data volumes without browser automation tools like Selenium, Puppeteer, or Playwright.

Floppydata’s Web Unblocker is a tool that simplifies web scraping by bypassing blocks and CAPTCHAs automatically, letting you collect data without needing browser automation or manual proxy setup. Some of its features include:

- Automated CAPTCHA solving

- Bypassing of anti-bot mechanisms with advanced browser fingerprinting

- Efficient data extraction from dynamic websites

- Built-in automatic proxy rotation and retry logic to keep you anonymous

Pricing is another factor to consider when choosing a scraper. Floppydata offers its Web Unblocker at competitive rates. A free trial is available, but it is limited to 5 (five) scrapes. There are four standard pricing tiers plus a custom plan:

- Growth Plan – From $0.98 per 1k results

- Professional Plan – From $0.75 per 1k results

- Business Plan – From $0.60 per 1k results

- Premium Plan – From $0.45 per 1k results

- Custom Plan – Contact customer support for a customized plan
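Because each tier is quoted per 1,000 results, a rough cost estimate is (results / 1,000) × rate. The sketch below applies that formula to the starting ("from") prices listed above. It assumes billing scales linearly with the number of results, which may not match actual invoices, and `estimate_cost` is a hypothetical helper name. It uses `Decimal` so currency amounts round exactly.

```python
from decimal import Decimal, ROUND_HALF_UP

# Starting prices per 1,000 results, copied from the plan list; actual rates may differ.
PRICE_PER_1K = {
    "Growth": Decimal("0.98"),
    "Professional": Decimal("0.75"),
    "Business": Decimal("0.60"),
    "Premium": Decimal("0.45"),
}


def estimate_cost(plan: str, results: int) -> Decimal:
    """Lower-bound estimate: (results / 1000) * per-1k price, rounded to cents."""
    cost = Decimal(results) / 1000 * PRICE_PER_1K[plan]
    return cost.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


for plan in PRICE_PER_1K:
    print(plan, estimate_cost(plan, 250_000))
```

For 250,000 results, this gives $245.00 on the Growth plan and $112.50 on the Premium plan.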

Pros of Floppydata’s No-Code Scraper

- Non-technical users can use it with ease

- Comes with built-in features that handle IP rotation, CAPTCHAs, and dynamic content

- Delivers data in formats like CSV or JSON for easy processing

- Cost-effective options for individuals as well as SMEs

- Fast data delivery

Cons of Floppydata’s No-Code Scraper

- Limited flexibility for customization

Other Ways to Scrape Reddit Data

Let us briefly analyze other methods that can be used to scrape Reddit data:

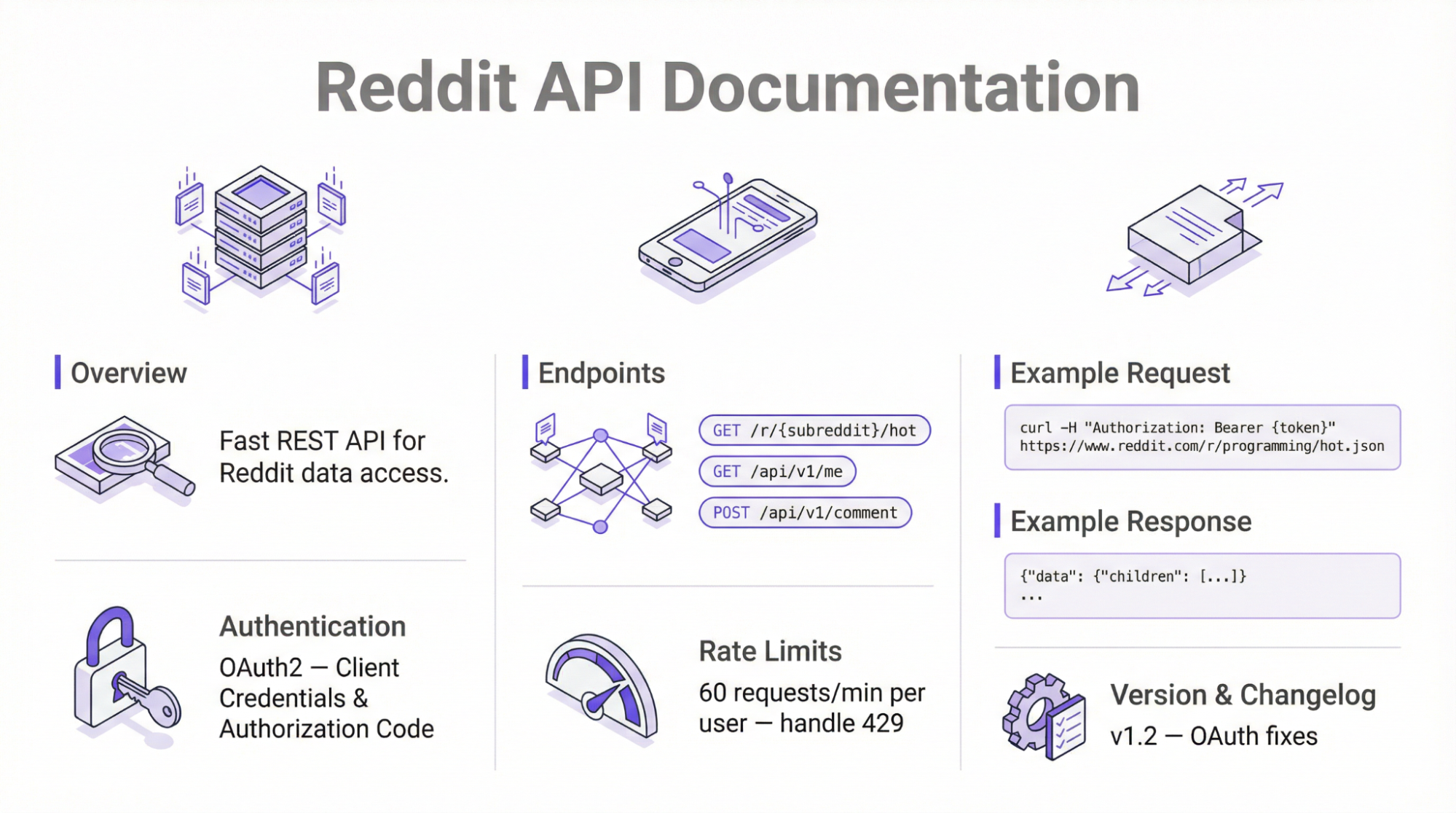

Reddit’s Official API

If you want to scrape the platform without third-party tools, then you should consider using the official API. This approach uses Reddit’s JSON API endpoints to scrape publicly available information using simple HTTP requests.

Using the official API requires OAuth2 authentication and is subject to rate limits of 100 queries per minute (QPM) on the free tier. According to policy updates rolled out in 2025, the API also requires manual approval from the platform before you can retrieve data.
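One way to stay under a 100 QPM limit is a client-side sliding-window limiter that you call before every request. This is a conservative sketch, not Reddit's own accounting method. The class name and parameters are hypothetical, and the clock and sleep functions are injectable so the logic can be tested without waiting.

```python
import time
from collections import deque
from typing import Callable


class RateLimiter:
    """Allow at most max_calls requests in any window_seconds-long span."""

    def __init__(self, max_calls: int = 100, window_seconds: float = 60.0,
                 clock: Callable[[], float] = time.monotonic,
                 sleep: Callable[[float], None] = time.sleep):
        self.max_calls = max_calls
        self.window = window_seconds
        self.clock = clock
        self.sleep = sleep
        self.calls: deque[float] = deque()  # timestamps of calls inside the window

    def wait(self) -> None:
        """Block until one more request fits in the window, then record it."""
        while True:
            now = self.clock()
            # Drop timestamps that have aged out of the window.
            while self.calls and now - self.calls[0] >= self.window:
                self.calls.popleft()
            if len(self.calls) < self.max_calls:
                self.calls.append(now)
                return
            # Sleep until the oldest recorded call leaves the window, then re-check.
            self.sleep(self.window - (now - self.calls[0]))
```

Create one `RateLimiter()` per OAuth client and call `limiter.wait()` immediately before each API request.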

Follow the steps below to collect data with Reddit’s official API:

- Register to get API access

First, log in to your Reddit account, or create one if you are a new user. Visit the Reddit Apps page, click “create an app”, and follow the on-screen instructions. Once the app is created, Reddit displays your client ID and client secret. You will also need a descriptive user agent string that identifies your script.

- Authentication

Use OAuth to obtain an access token. You can either authenticate with a wrapper library such as Python’s PRAW, or use the requests library, which involves handling the access tokens manually.

- Make HTTP Requests

Next, use an HTTP library such as Python’s requests to send GET requests to the relevant endpoints.

- Parse and Save Data

The API returns JSON. Parse the fields you need and save them in a readable format such as JSON or CSV.

N.B.: The process described above is only efficient for simple scraping tasks, and it requires solid programming knowledge.
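The authentication and request steps can be sketched with Python's standard library. This version uses `urllib` in place of the requests library so it has no third-party dependencies. It assumes Reddit's application-only OAuth flow (a `client_credentials` grant posted to `https://www.reddit.com/api/v1/access_token` with HTTP Basic auth), the `https://oauth.reddit.com/r/{subreddit}/new` listing endpoint, and an app that has already been approved. Check these against Reddit's current API documentation. The function names are illustrative.

```python
import base64
import json
import urllib.parse
import urllib.request

TOKEN_URL = "https://www.reddit.com/api/v1/access_token"
API_BASE = "https://oauth.reddit.com"


def build_token_request(client_id: str, client_secret: str, user_agent: str) -> urllib.request.Request:
    """Token request: client_credentials grant, authenticated with HTTP Basic auth."""
    credentials = base64.b64encode(f"{client_id}:{client_secret}".encode()).decode()
    body = urllib.parse.urlencode({"grant_type": "client_credentials"}).encode()
    return urllib.request.Request(
        TOKEN_URL,
        data=body,  # supplying a body makes urllib send a POST
        headers={"Authorization": f"Basic {credentials}", "User-Agent": user_agent},
    )


def build_listing_request(token: str, subreddit: str, user_agent: str, limit: int = 25) -> urllib.request.Request:
    """GET request for a subreddit's newest posts, authorized with the bearer token."""
    query = urllib.parse.urlencode({"limit": limit})
    return urllib.request.Request(
        f"{API_BASE}/r/{subreddit}/new?{query}",
        headers={"Authorization": f"bearer {token}", "User-Agent": user_agent},
    )


def fetch_json(request: urllib.request.Request) -> dict:
    """Send the request and decode the JSON response body (performs network I/O)."""
    with urllib.request.urlopen(request, timeout=10) as response:
        return json.load(response)
```

In use, `fetch_json(build_token_request(client_id, client_secret, user_agent))["access_token"]` yields a token, and `fetch_json(build_listing_request(token, "python", user_agent))` returns a listing whose posts sit under `["data"]["children"]`.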

Pros of Reddit Official API

- It provides official and reliable access to Reddit servers to collect data

- It complies with the platform’s terms of use

- Minimal risk of bans provided you don’t exceed the rate limit

- Supports extraction of publicly available data without anti-scraping restrictions.

Cons of Reddit Official API

- Data is very limited

- Not suitable for those with little or no coding skills

- Rate limits make it challenging to scale the scraping process

- High-volume data extraction can be quite expensive

Using Python

Python is a programming language with an extensive ecosystem of libraries that support web scraping. For Reddit, most developers use PRAW (Python Reddit API Wrapper) to interact with the API and extract data.

To extract data beyond the API limits, developers turn to parsing libraries like BeautifulSoup, which parse a page’s HTML (or XML) so that the extracted fields can then be saved as JSON or another format.

Reddit’s structure frequently changes, which may affect the performance of the scraper. Therefore, the scraper needs to be regularly updated to adapt to modifications on the platform.

Best Practices for Using a Python Reddit Scraper

Rotate IP Address

A key reason to rotate IP addresses is anonymity. The platform can also detect when repeated requests originate from the same IP address, which can trigger an IP ban and make it difficult to complete data collection.
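A simple form of IP rotation is round-robin: each request goes through the next proxy in a list. The sketch below does this with the standard library's `urllib.request.ProxyHandler` and `build_opener`. The proxy URLs are hypothetical placeholders for whatever endpoints your provider issues, and `proxy_openers` is a hypothetical helper name.

```python
import itertools
import urllib.request

# Hypothetical proxy endpoints; replace them with the ones your provider gives you.
PROXIES = [
    "http://user:pass@proxy1.example.com:8000",
    "http://user:pass@proxy2.example.com:8000",
    "http://user:pass@proxy3.example.com:8000",
]


def proxy_openers(proxies):
    """Yield (proxy, opener) pairs, cycling through the proxy list forever."""
    for proxy in itertools.cycle(proxies):
        handler = urllib.request.ProxyHandler({"http": proxy, "https": proxy})
        yield proxy, urllib.request.build_opener(handler)
```

To use it, create `rotation = proxy_openers(PROXIES)` once, then call `proxy, opener = next(rotation)` and `opener.open(url, timeout=10)` for each request so consecutive requests leave from different addresses.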

CAPTCHA Handling

A basic Python scraper that sends plain HTTP requests cannot handle CAPTCHAs, which the platform may show to distinguish human from bot activity. To get around them, use headless browsers driven by tools such as Selenium, Playwright, or Puppeteer to mimic human browsing patterns. This makes automated activity harder to detect.
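A plain HTTP scraper can at least recognize when it has been blocked and back off, rather than hammering the site. The sketch below is not a CAPTCHA solver. It flags a response as blocked on HTTP 403 or 429, or when the page contains CAPTCHA-like text, and retries with exponential backoff and full jitter. If the scraper is still blocked, you could hand the URL to a headless browser. The marker strings are hypothetical and should be tuned to what you actually observe. `fetch` stands for any function you supply that returns `(status, body)`.

```python
import random
import time

BLOCK_STATUS = {403, 429}
BLOCK_MARKERS = ("captcha", "are you a robot")  # hypothetical markers; tune as needed


def looks_blocked(status: int, body: str) -> bool:
    """Heuristic: treat 403/429 responses or CAPTCHA-like pages as blocks."""
    text = body.lower()
    return status in BLOCK_STATUS or any(marker in text for marker in BLOCK_MARKERS)


def backoff_delays(retries: int, base: float = 2.0, cap: float = 60.0, rng=random.random):
    """Full-jitter exponential backoff: delay n is uniform in [0, min(cap, base * 2**n))."""
    return [rng() * min(cap, base * 2 ** n) for n in range(retries)]


def fetch_with_retries(fetch, url, retries=4, sleep=time.sleep):
    """Call fetch(url) until the response does not look blocked or retries run out."""
    delays = backoff_delays(retries)
    for attempt, delay in enumerate(delays):
        status, body = fetch(url)
        if not looks_blocked(status, body):
            return status, body
        if attempt < retries - 1:
            sleep(delay)
    return None  # still blocked: fall back to a headless browser or skip the URL
```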

Use Headless Browsers to Handle Dynamic Content

Like most modern websites, Reddit uses JavaScript to load dynamic content. Regular scrapers usually parse only the initial HTML and may miss content that loads later. One way to handle this is to integrate a headless browser driven by a tool like Selenium.

Pros of Using Python

- Python and its scraping libraries are open source and free

- Highly customizable

- Reliable

Cons of Using Python Scrapers

- Requires Python programming knowledge

- Limited to public data

- Subject to Reddit’s API rate limits when scraping through the API

Use Cases of Reddit Data

Reddit web scraping provides access to data that can be used for various purposes. Some of the most common use cases are:

Market Research

Many professionals collect Reddit data for market research. The information can be sorted and analyzed to understand market sentiment, current trends, and brand reputation. These insights can then inform decisions about product advertising, packaging, and pricing that suit the target market.

Financial Analysis

Reddit hosts active communities dedicated to finance, and discussions of stocks and shares, cryptocurrency, and international markets add up to a large volume of data. Financial analysis is necessary before any significant investment to minimize the risk of loss, and this data can be extracted, analyzed, and interpreted to gauge the market outlook and make informed financial decisions.

AI and Machine Learning

Data from Reddit can be used to train LLMs (Large Language Models) and improve AI-driven search results. AI models are only as good as the data they are trained on, and Reddit’s large daily user base makes it an ideal data source. One example is Reddit’s native AI, an LLM fed with data from the platform to provide personalized pages for each visitor.

Social Research

Social research is another use case. Subreddit data can support studies of patterns in human interaction and help gauge trends and opinions on topics like online privacy and consent. The data can also be used to train chatbots that provide 24/7 support, which may not be feasible with human support agents.

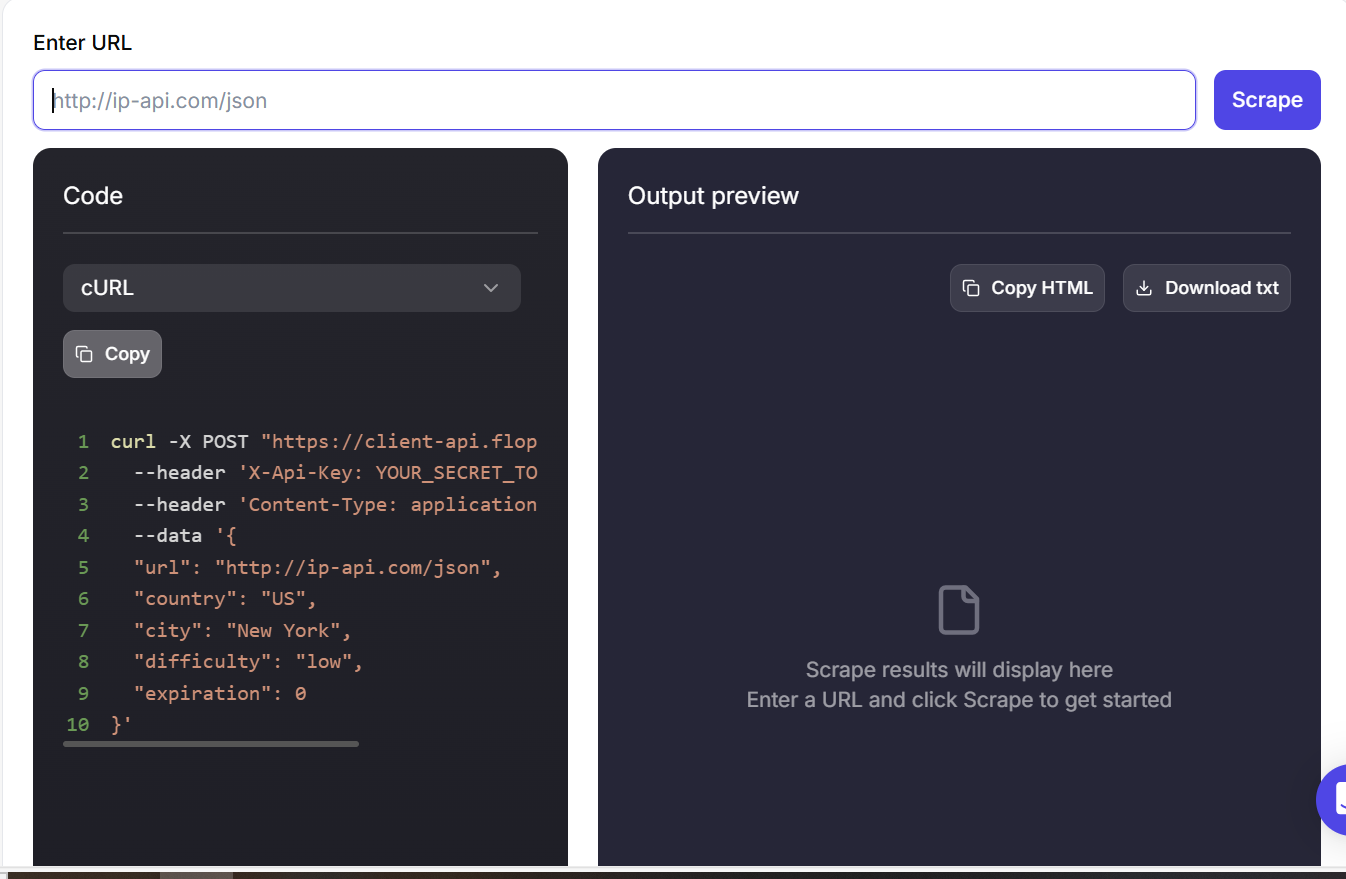

How to Scrape Reddit Data with Floppydata Web Unblocker

Using Floppydata’s Web Unblocker as a Reddit scraper is easy and can be achieved in a few steps. Here is a step-by-step guide on how to scrape Reddit data with Floppydata.

Let’s get into it!

Step 1: Visit the Web Unblocker page and sign in to get started

Step 2: Log in to your Reddit account and open a search results page with filters that align with your use case.

Step 3: Go to Floppydata’s Web Unblocker dashboard and paste the URL.

Step 4: Your results are ready within a few minutes.

Conclusion

Learning how to scrape Reddit data can be a powerful asset for researchers, individuals, and companies. It is a way to gather insights from one of the largest global communities on the web. To collect this data, you can either write a script with a programming language or use a no-code solution.

Floppydata’s Web Unblocker is a comprehensive no-code scraping solution that extracts data from Reddit and delivers it in your preferred format. New users get up to 5 free sessions for collecting data from any platform.

Want an easy, seamless way to scrape Reddit data? Try Floppydata’s Web Unblocker today!

FAQ

How to scrape Reddit data?

Use the Reddit API, scraping tools, or automation scripts to collect posts, comments, and metadata. Floppydata simplifies the process with reliable proxies and automated data extraction.

What are the challenges?

Reddit has strict anti-bot systems and rate limits, making scraping harder. Floppydata overcomes these with clean IP rotation and high success rates.
